By [Alon Gal] | March 2025

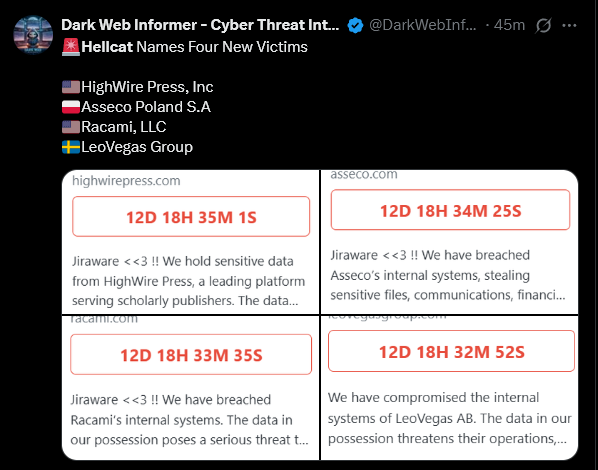

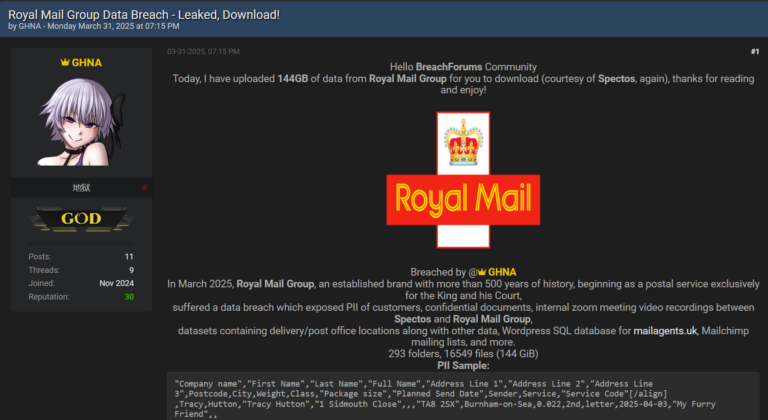

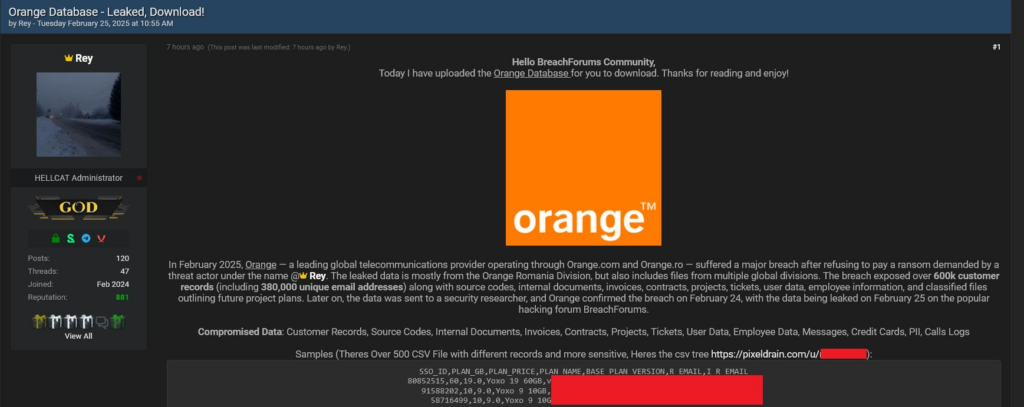

Breaches like those at Orange, Schneider Electric, and Telefonica often start with a simple infostealer infection that captures JIRA or Confluence credentials, the signature initial attack vector of the HELLCAT group. From there, it’s a straight shot to pulling heaps of data from internal servers. Companies tend to downplay these leaks (“no big deal, just some files”) while the data floats around cybercrime circles, waiting for someone to dig in.

The Orange leak, for instance, weighs in at 7.19GB and splits into two main chunks: “issues” and “files.”

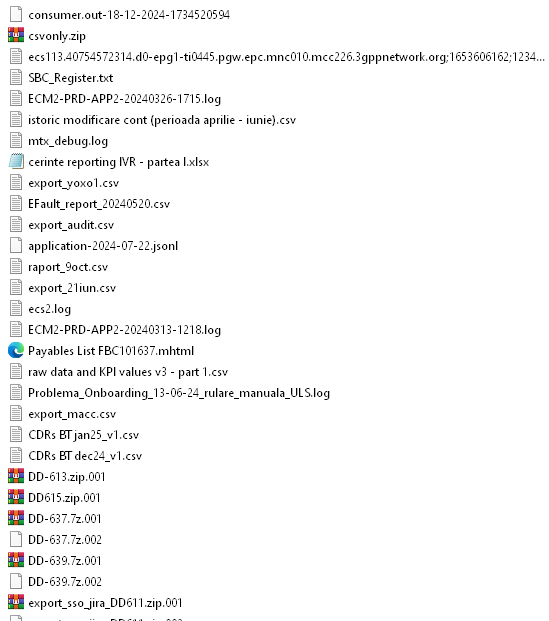

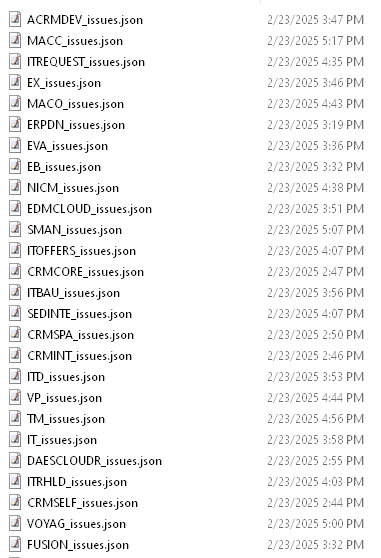

The “files” folder holds 8,601 items (CSVs, images, zips, .msg files, you name it), all ripped right from Orange’s JIRA server.

Then there’s “issues,” with 235 JSON files breaking down employee-reported problems: GDPR_issues.json (data compliance risks), SVM_issues.json (IT security gaps), CCCAP_issues.json (credit card fraud concerns), and more. It’s a potential goldmine, but the sheer messiness of it all means most hackers barely scratch the surface. The good stuff stays buried.

That’s where AI comes in. It’s not just a tool—it’s a shift in how hackers can tackle these sprawling leaks. Hudson Rock took a practical look at the Orange dump through a hacker’s lens, using AI to cut through the clutter. Here’s what we found it can do, and why it matters.

How AI Unlocks the Value in Leaks Like Orange

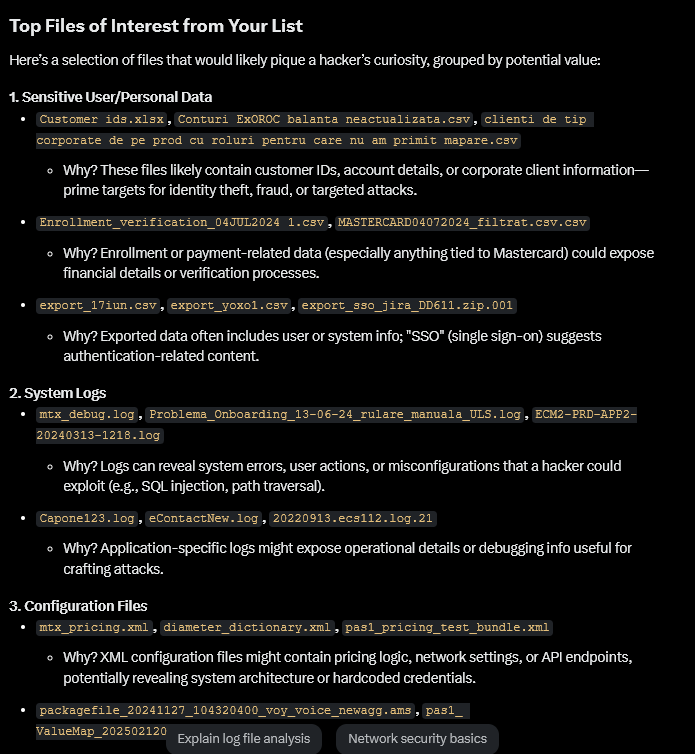

1. Prioritizing the High-Value Targets

Sifting through 8,601 files by hand is a non-starter. We fed the Orange file list into an AI and asked it to flag what’s worth checking out. It quickly highlighted files like MASTERCARD04072024_filtrat.csv (payment-related data), export_sso_jira_DD611.zip.001 (possible SSO credentials), and mtx_debug.log (system logs). Instead of guessing, hackers get a shortlist of where the real value lies.
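The shortlist idea can be approximated without any AI model at all. The sketch below swaps in a plain keyword heuristic for the model we actually used, which is a much cruder technique. The keyword list, the weights, and the function names `score_filename` and `shortlist` are assumptions made up for illustration; the file names are the ones mentioned above.

```python
# Hypothetical keyword weights chosen for illustration; this is a simple
# heuristic stand-in, not the AI-based ranking described in the article.
KEYWORD_WEIGHTS = {
    "mastercard": 5,
    "sso": 5,
    "password": 5,
    "credential": 5,
    "export": 3,
    "employees": 3,
    "debug": 2,
    "log": 2,
}


def score_filename(name: str) -> int:
    """Sum the weights of every keyword found in the lowercased file name."""
    lowered = name.lower()
    return sum(weight for kw, weight in KEYWORD_WEIGHTS.items() if kw in lowered)


def shortlist(names, top_n=3):
    """Return up to top_n names, highest score first, skipping zero scores."""
    scored = [(score_filename(n), n) for n in names]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: (-s[0], s[1]))
    return [name for _, name in ranked[:top_n]]


files = [
    "MASTERCARD04072024_filtrat.csv",
    "export_sso_jira_DD611.zip.001",
    "mtx_debug.log",
    "SIM Change Flow v4.jpeg",
]
print(shortlist(files))
```

A defender can run the same kind of pass over a file listing from their own JIRA or Confluence instance to see which attachments would stand out first if the server were ever dumped.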

2. Finding PII and Secrets Fast

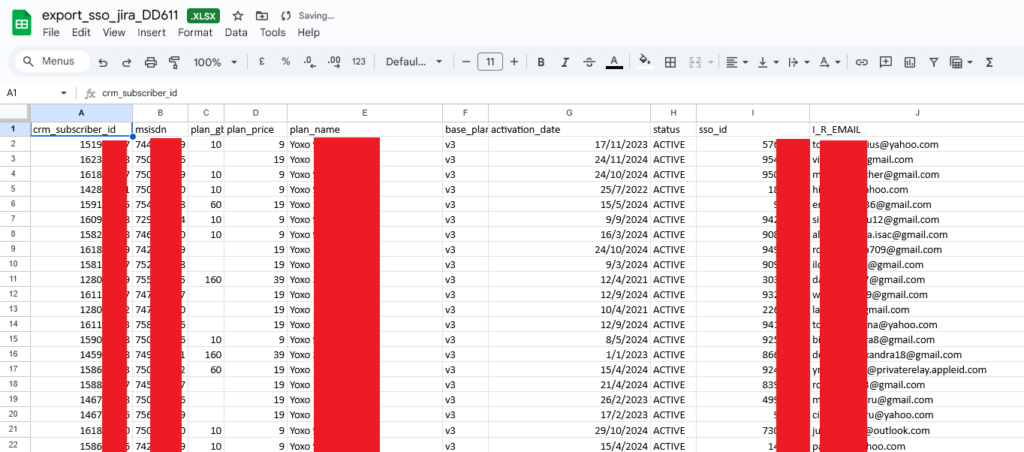

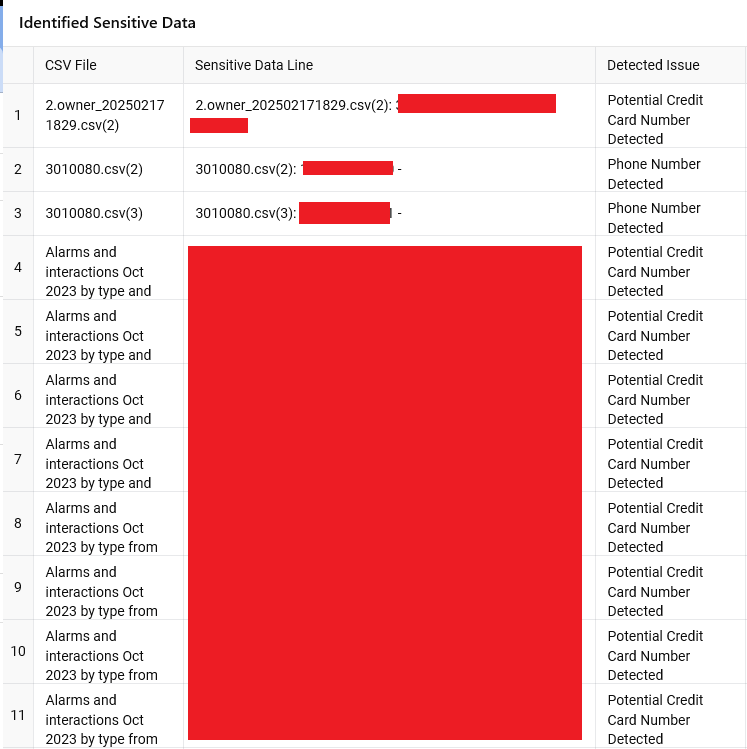

Hackers thrive on personal data—names, numbers, emails—for phishing or fraud. AI can scan bulky CSVs like raw data and KPI values v3 – full – shortened.csv or BDEBT_Reconnections_month NDW.csv and pull out PII, API keys, or other sensitive pieces of data in minutes. What used to be a tedious manual hunt is now a quick automated sweep, stripping away the noise.
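To give a feel for how cheap this kind of sweep is, here is a minimal sketch that reads only the first few lines of a CSV and counts matches for a handful of patterns. It uses regular expressions rather than an AI model, so it is a deliberately simpler technique than the one we tested. The patterns, the `scan_head` function, and the sample rows are all assumptions for illustration; the sample is synthetic and not taken from the leak.

```python
import io
import re
from itertools import islice

# Illustrative patterns only; production-grade PII and secret detection
# needs far more careful rules and validation than this.
PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "phone": re.compile(r"\+?\d[\d\s-]{7,}\d"),
    "api_key": re.compile(r"(?i)\b(?:api[_-]?key|token|secret)\s*[=:]\s*\S+"),
}


def scan_head(stream, max_lines=5):
    """Count pattern hits in the first max_lines lines of a text stream."""
    hits = {label: 0 for label in PATTERNS}
    for line in islice(stream, max_lines):
        for label, pattern in PATTERNS.items():
            hits[label] += len(pattern.findall(line))
    return hits


# Synthetic sample standing in for the head of a CSV file.
sample = io.StringIO(
    "name,email,phone,note\n"
    "Jane Doe,jane.doe@example.com,+40 721 000 000,api_key=EXAMPLE123\n"
    "John Roe,john.roe@example.com,,\n"
)
print(scan_head(sample))
```

Sampling only the head of each file keeps the pass fast across thousands of attachments, at the cost of missing anything that appears only deeper in a file. Security teams can point the same scan at their own ticketing exports to find out what an attacker would see first.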

In our test, we provided the AI with only the first few lines of every CSV in the dataset; the screenshots in this section show just a small portion of the output it generated.

3. Spotting Blackmail Material

Companies dread leaks that expose screw-ups, and AI can zero in on that. Feed it files like GDPR_issues.json or Security Assessment Report – WFM Tool 2023.pdf, and it’ll flag compliance issues, potential fraud, or internal problems that could be leveraged for blackmail. A hacker could easily turn that into a “pay us or we spill” threat.

4. Fueling Corporate Espionage

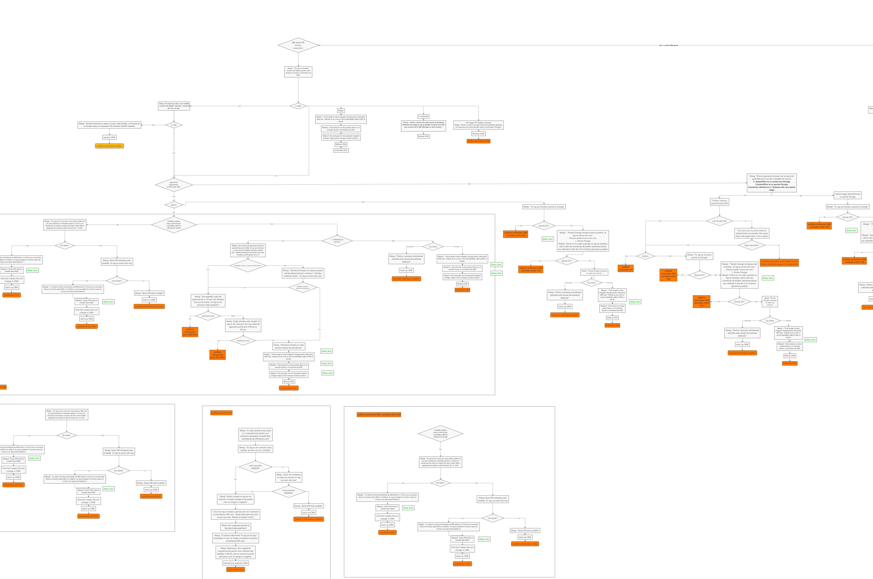

Internal docs can give competitors an edge, and AI makes it easier to exploit them. Take SIM Change Flow v4.jpeg, a diagram of Orange’s SIM swap security. AI can analyze it and highlight weak points, helping rivals replicate processes or helping hackers exploit flaws to carry out SIM swaps. Files like mtx_pricing.xml (pricing logic) or APIA.V_NDW_APIA_EMPLOYEES_addTV.csv (employee data) could also hand over operational insights.

5. Automating Exploit Discovery

Logs like mtx_debug.log or Problema_Onboarding_13-06-24_rulare_manuala_ULS.log might reveal system errors or misconfigurations. AI can parse these for patterns—like unpatched vulnerabilities or exposed endpoints—that hackers can target without breaking a sweat.
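The pattern-spotting step can be illustrated with a small sketch that tallies severity levels and collects any URLs that appear in log lines. This is a regex pass, not the AI analysis described above, and it assumes a generic log layout because the actual formats of these files are not documented here. The `summarize_log` function and the sample lines are hypothetical; the host name is a placeholder.

```python
import re
from collections import Counter

# Assumed, generic patterns; the real log formats are not described in the article.
LEVEL_RE = re.compile(r"\b(ERROR|WARN|FATAL)\b")
URL_RE = re.compile(r"https?://[^\s\"'<>]+")


def summarize_log(lines):
    """Return (severity counts, sorted unique URLs) for an iterable of log lines."""
    levels = Counter()
    urls = set()
    for line in lines:
        levels.update(LEVEL_RE.findall(line))
        urls.update(URL_RE.findall(line))
    return levels, sorted(urls)


# Synthetic lines for illustration; not taken from mtx_debug.log.
sample = [
    "2024-06-13 10:00:01 INFO service started",
    "2024-06-13 10:00:05 ERROR timeout calling http://internal.example/api/v1/users",
    "2024-06-13 10:00:09 WARN retrying http://internal.example/api/v1/users",
    "2024-06-13 10:00:12 ERROR connection refused",
]
levels, urls = summarize_log(sample)
print(dict(levels), urls)
```

Running a pass like this over logs attached to internal tickets is a quick way for a company to learn which internal endpoints and recurring errors it has been exposing to anyone with access to the ticketing system.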

The Old Approach Doesn’t Cut It Anymore

Historically, hackers treated big leaks like this as a quick smash-and-grab—snag a few files, maybe some credentials, and move on. The volume was too intimidating to dig deeper. But AI changes that. It’s like handing hackers a filter that turns a chaotic pile of data into a curated list of actionable intel. For Orange, that means overlooked files suddenly become weapons.

The Orange leak shows how AI flips the script. What was once a daunting haystack is now a neatly sorted stack of needles—data that hackers can weaponize faster and smarter than ever. Companies might shrug off these breaches, but with AI in play, the stakes are higher. Sensitive customer info, internal flaws, and competitive secrets aren’t just sitting there anymore—they’re being actively mined. The days of leaks gathering dust in forums are over; AI’s making sure nothing stays buried.

Where We’re Headed: AI as the Hacker’s Co-Pilot

This is just the start. As AI gets sharper—think better natural language processing, pattern recognition, or even generative models—hackers won’t just analyze leaks; they’ll weaponize them in real time. Imagine AI churning through a fresh dump, spitting out phishing templates, exploit scripts, or blackmail pitches on the fly. Tools like ChatGPT are already accessible; give it a year or two, and we’ll see custom AI rigs built for cybercrime, sold on the dark web like infostealers are today. Companies will need to rethink their “not a big deal” stance, because AI’s turning every leak into a precision strike. Buckle up—it’s about to get messy.

To learn more about how Hudson Rock protects companies from imminent intrusions caused by info-stealer infections of employees, partners, and users, as well as how we enrich existing cybersecurity solutions with our cybercrime intelligence API, please schedule a call with us, here: https://www.hudsonrock.com/schedule-demo

We also provide access to various free cybercrime intelligence tools that you can find here: www.hudsonrock.com/free-tools

Thanks for reading, Rock Hudson Rock!
